In our previous article, we delved into the details of running LLaMa Chat as console application. Now, it's time to take a leap forward with LLaMa 2, the current evolution in the world of open large language models. In this article, we'll provide a guide how to setup LLaMa 2 and demonstrate how to connect it with our AIME-API-Server, opening up new possibilities for deploying conversational AI experiences.

• How to run Llama 3 with AIME API to Deploy Conversational AI Solutions

At AIME, we understand the importance of providing AI tools as services to harness the full potential of technologies like LLaMa 2. That's why we're excited to announce the integration of LLaMa 2 with our new AIME-API, providing developers with a streamlined solution for providing its capabilities as a scalable HTTP/HTTPS service for easy integration with client applications. Whether you're building chatbots, virtual assistants, or interactive customer support systems, this integration offers flexibility to make modifications and adjustments to the model and the scalability to deploy such solutions.

Our LLaMa2 implementation is a fork from the original LLaMa 2 repository supporting all LLaMa 2 model sizes: 7B, 13B and 70B.

Our fork provides the possibility to convert the weights to be able to run the model on a different GPU configuration than the original LLaMa 2 (see table 2).

With the integration in our AIME-API and its batch aggregation feature, it is possible to leverage the full possible inference performance of different GPU configurations by aggregating requests to be processed in parallel as batch jobs by the GPUs. This dramaticly increases the achievable throughput of chat requests.

We also benchmarked the different GPU configurations with this technology to guide the decision which hardware gives best throughput performance to process LLaMa requests.

Hardware Requirements

Although the LLaMa2 models were trained on a cluster of A100 80GB GPUs it is possible to run the models on different and smaller multi-GPU hardware for inference.

In table 1 we list a summary of the minimum GPU requirements and recommended AIME systems to run a specific LLaMa 2 model with realtime reading performance:

| Model | Size | Minimum GPU Configuration | Recommended AIME Server | Recommended AIME Cloud Instance |

|---|---|---|---|---|

| 7B | 13GB | 1x Nvidia RTX A5000 24GB or 1x Nvidia RTX 4090 24GB | AIME G400 Workstation | V10-1XA5000-M6 |

| 13B | 25GB | 2x Nvidia RTX A5000 24GB or 1x NVIDIA RTX A6000 | AIME A4000 Server | V10-2XA5000-M6, v14-1XA6000-M6 |

| 70B | 129GB | 2x Nvidia A100 80GB, 4x Nvidia RTX A6000 48GB or 8x Nvidia RTX A5000 24GB | AIME A8000 Server | V28-2XA180-M6, C24-4X6000ADA-Y1, C32-8XA5000-Y1 |

A detailed performance analysis of different hardware configurations can be found in the section "LLaMa2 Inference GPU Benchmarks" of this article.

Getting Started: How to Deploy LLaMa2-Chat

In the following we show how to setup, get the source, download the LLaMa 2 models, and how to run LLaMa2 as console application and as worker for serving HTTPS/HTTP requests.

Create an AIME ML Container

Here are instructions for setting up the environment using the AIME ML container management. Similar results can be achieved by using Conda or alike.

To create a PyTorch 2.1.2 environment for installation, we use the AIME ML container, as described in aime-ml-containers using the following command:

> mlc-create llama2 Pytorch 2.1.2-aime -w=/path/to/your/workspace -d=/destination/of/the/checkpointsThe -d parameter is only necessary if you don’t want to store the checkpoints in your workspace but in a specific data directory. It is mounting the folder /destination/of/the/checkpoints to /data in the container. This folder requires at least 250 GB of free storage to store (all) the LLaMa models.

Once the container is created, open it with:

> mlc-open llama2You are now working in the ML container being able to install all requirements and necessary pip and apt packages without interfering with the host system.

Clone the LLaMa2-Chat Repository

LLaMa2-Chat is a forked version of the original LLaMa 2 reference implementation by AIME, with following added features:

- Tool for converting the original model checkpoints to different GPU configurations

- Improved text sampling

- Implemented token-wise text output

- Interactive console chat

- AIME API Server integration

Clone our LLaMa2-Chat repository with:

[llama2] user@client:/workspace$

> git clone https://github.com/aime-labs/llama2_chatNow install the required pip packages:

[llama2] user@client:/workspace$

> pip install -r /workspace/llama2_chat/requirements.txtDownload LLaMa 2 Model Checkpoints

From Meta

In order to download the model weights and tokenizer, you have to apply for access by meta and accept their license. Once your request is approved, you will receive a signed URL via e-mail. Make sure you have wget and md5sum installed in the container:

[llama2] user@client:/workspace$

> sudo apt-get install wget md5sumThen run the download.sh script with:

[llama2] user@client:/workspace$

> /workspace/download.shPass the URL provided when prompted to start the download. Keep in mind that the links expire after 24 hours and a certain amount of downloads. If you start seeing errors such as `403: Forbidden`, you can always re-request a link.

From Huggingface

To download the checkpoints and tokenizer via Huggingface you also have to apply for access by meta and accept their license and register at huggingface with the same email address as your granted meta access.

Install git lfs in the container to be able to clone repositories with large files by:

[llama2] user@client:/workspace$

> sudo apt-get install git-lfs

> git lfs installThen download the checkpoints of the 70B model with:

[llama2] user@client:/workspace$

> cd /destination/to/store/the/checkpoints

[llama2] user@client:/destination/to/store/the/checkpoints$

> git lfs clone https://huggingface.co/meta-llama/Llama-2-70b-chatTo download the other model sizes, replace Llama-2-70b-chat with Llama-2-7b-chat or Llama-2-13b-chat. The download process might take a while depending on your internet connection speed. For the 70B model 129GB are to be downloaded. You will be asked twice for username and password. As password you have to use an access token generated in the Huggingface account settings.

Convert the Checkpoints to your GPU Configuration

The downloaded checkpoints are designed to only work with certain GPU configurations: 7B with 1 GPU, 13B with 2 GPUs and 70B with 8 GPUs. To run the model on a different GPU configuration, we provide a tool to convert the weights respectively. Table 2 shows all supported GPU configs.

| Model size | Num GPUs 24GB | Num GPUs 40GB | Num GPUs 48GB | Num GPUs 80GB |

|---|---|---|---|---|

| 7B | 1 | 1 | 1 | 1 |

| 13B | 2 | 1 | 1 | 1 |

| 70B | 8 | 4 | 4 | 2 |

The converting can be started with:

[llama2] user@client:/workspace$

> python3 /workspace/llama2_chat/convert_weights.py --input_dir /destination/to/store/the/checkpoints --output_dir /destination/to/store/the/checkpoints --model_size 70B --num_gpus <num_gpus>The converting will take some minutes depending on CPU and storage speed and model size.

Run LLaMa 2 as Interactive Chat in the Terminal

To try the LLaMa2 models as a chat bot in the terminal, use the following PyTorch script and specify the desired model size to use:

[llama2] user@client:/workspace$

> torchrun --nproc_per_node <num_gpus> /workspace/llama2_chat/chat.py --ckpt_dir /data/llama2-model/llama-2-70b-chatThe chat mode is simply initiated by giving the following context as the starting prompt. It sets the environment so that the language model tries to complete the text as a chat dialog:

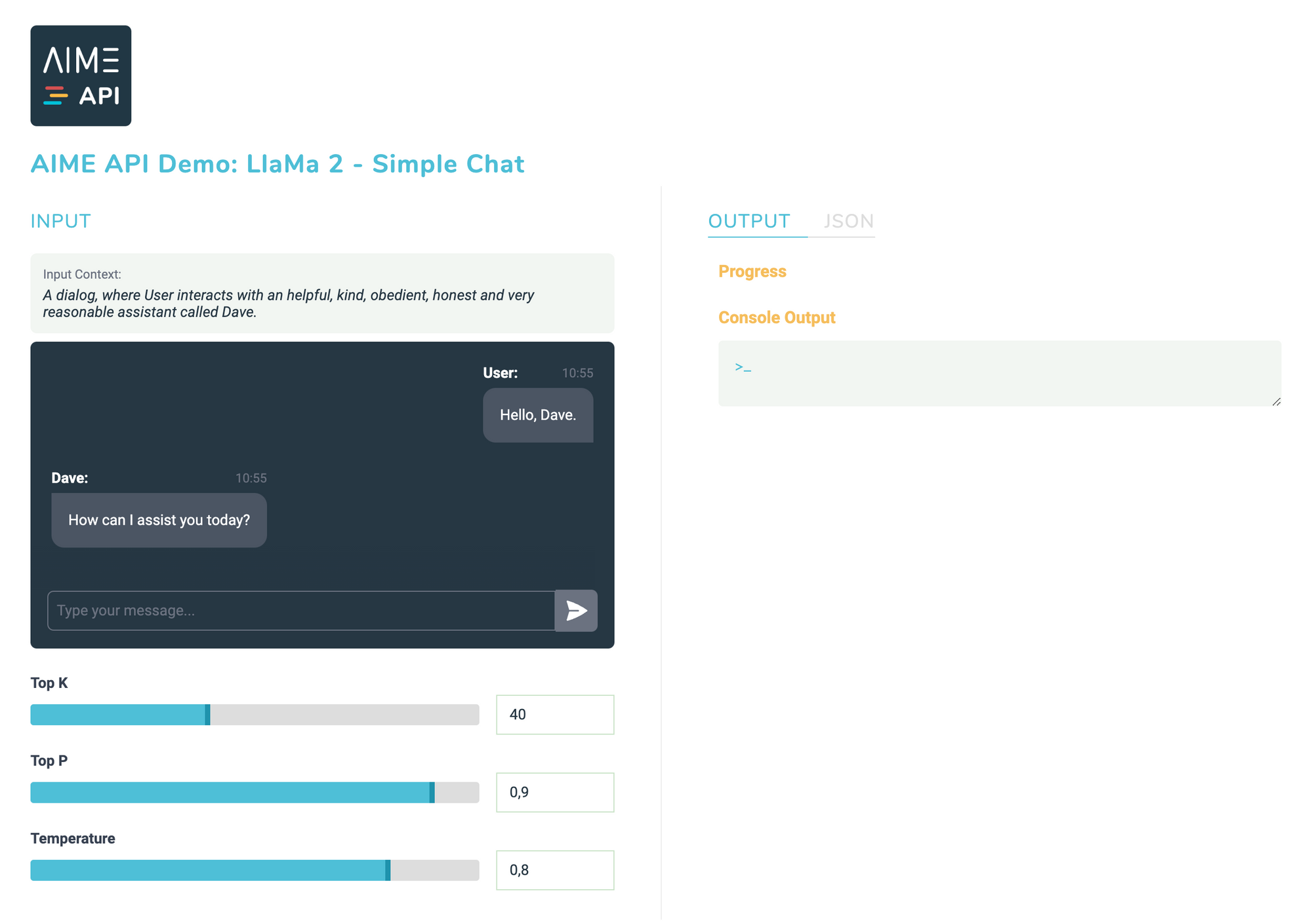

A dialog, where User interacts with an helpful, kind, obedient, honest and very reasonable assistant called Dave.

User: Hello, Dave

Dave: How can I assist you today?

Now the model acts as a simple chat bot. The starting prompt influences the mood of the chat answers. In this case, he credibly fills the role of a helpful assistant and does not leave it again without further ado. Interesting, funny to useful answers emerge - depending on the input texts.

Run LLaMa 2 Chat as Service with the AIME API Server

Setup AIME API Server

To read about how to setup and start the AIME API Server please see the setup section in the documentation.

Start LLaMa2 as API Worker

To connect the LLama2-chat model with the AIME-API-Server ready to receive chat requests through the JSON HTTPS/HTTP interface, simply add the flag --api_server followed by the address of the AIME API Server to connect to:

[llama2] user@client:/workspace$

> torchrun --nproc_per_node <num_gpus> /workspace/llama2_chat/chat.py --ckpt_dir /data/llama2-model/llama-2-70b-chat --api_server https://api.aime.info/Now the LLama 2 model acts as a worker instance for the AIME API Server ready to process jobs ordered by clients of the AIME API Server.

For a documentation on how to send client requests to the AIME API Server please read on here.

Try out LLaMa2 Chat with AIME API Demonstrator

The AIME-API-Server offers client interfaces for various programming languages. To demonstrate the power of LLaMa 2 with the AIME API Server, we provide a fully working LLaMa2 demonstrator using our Java Script client interface.

LLaMa 2 Inference GPU Benchmarks

To measure the performance of your LLaMA 2 worker connected to the AIME API Server, we developed a benchmark tool as part of our AIME API Server to simulate and stress the server with the desired amount of chat requests. First install the requirements with:

user@client:/workspace/aime-api-server/$

> pip install -r requirements_api_benchmark.txtThen the benchmark tool can be started with:

user@client:/workspace/aime-api-server/$

> python3 run_api_benchmark.py --api_server https://api.aime.info/ --total_requests <number_of_requests>run_api_benchmark.py will send as many requests as possible in parallel and up to <number_of_requests> chat requests to the API server. Each requests starts with the initial context "Once upon a time". LLaMa2 will generate, depending on the used model size, a story of about 400 to 1000 tokens length. The processed tokens per second are measured and averaged over all processed requests.

Results

We show the results of the different LLaMa 2 model sizes and GPU configurations. The model is loaded in such a way that it can use the GPUs in batch mode. In the bar charts the maximum possible batch size, which equals the parallel processable chat sessions, is stated below the GPU model and is directly related to the available GPU memory.

The results are shown as total possible throughput in tokens per second and the smaler bar shows the tokens per second for an individual chat session.

The human (silent) reading speed is about 5 to 8 words per second. With a ratio of 0.75 words per token, it is comparable to a required text generation speed of about 6 to 11 tokens per second to be experienced as not to slow.

LLaMa 2 7B GPU Performance

LLaMa 2 13B GPU Performance

LLaMa 2 70B GPU Performance

Conclusion

The integration of LLaMa 2 within AIME API and its simple and fast setup opens up a scalable solution for developers looking to deploy their conversational AI solutions. By deploying LLaMa 2 with the AIME API Server you can build advanced applications such as chatbots, virtual assistants and interactive customer support systems.

The hardware requirements for running LLaMa 2 models are flexible, allowing for deployment on various distributed GPU configurations and extendable setups or infrastructure to serve thousands of requests. Even the minimum GPU requirements or smallest recommended AIME systems for each model size ensure a more than real-time reading response performance for a seamless multi-user chat experience.

• How to run Llama 3 with AIME API to Deploy Conversational AI Solutions